The Tensorleap blog

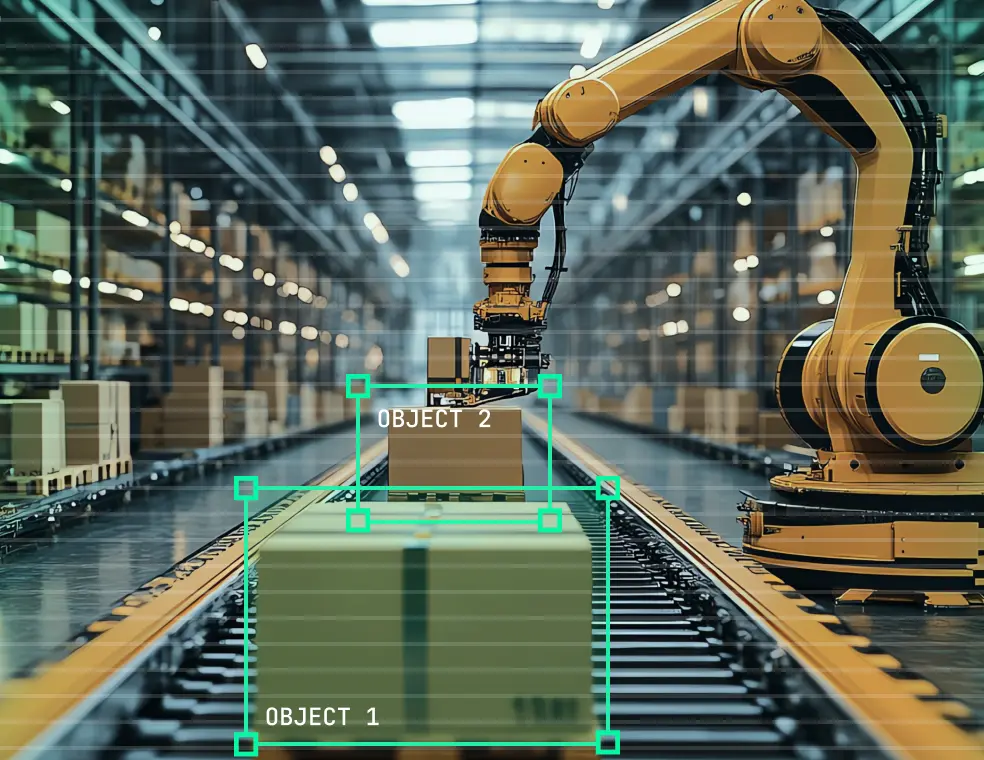

When Robotics Leaves The Lab And Stalls

Robotics teams know the pattern. A system performs reliably in controlled pilots, then struggles the moment it reaches a real factory, warehouse, hospital, or site. Pilots stall. Rollouts freeze. Robots remain in assist mode or shadow deployment far longer than planned. Human overrides become routine. Model updates are delayed because no one is confident they won’t break something else.

What looked like a technical success becomes an operational risk. Production teams lose trust. Safety teams tighten constraints. ROI projections quietly expire. The issue is rarely ambition or belief in robotics; it’s the growing gap between how systems behave in the lab and how they behave when exposed to real environments, real wear, and real people.

Where things break in the real world

Once deployed, robotics systems begin to fail in familiar ways.

Sensors drift. Camera calibration degrades. LiDAR noise profiles shift. Lighting changes across shifts, seasons, and facilities. Models that worked on one line or SKU struggle on the next. Minor layout changes, clutter, or human traffic introduce edge cases no one trained for.

These failures rarely arrive as clean crashes. Performance erodes. False positives increase. Task success rates quietly drop. Operations escalate issues that engineering cannot easily reproduce. Logs show anomalies, not causes.

The robot is still “working,” just not reliably. And because the degradation is gradual, it often goes unnoticed until uptime, safety margins, or throughput are already impacted.

The moment teams realize they’re flying blind

Eventually, a failure occurs that no one can confidently explain.

Was it sensor drift or environment change? A perception gap or a logic error? A data issue or a control issue? The team replays logs, inspects frames, reruns simulations. Nothing clearly points to why the robot behaved the way it did.

At that point, teams confront a harder truth. They can see what happened, but they don’t understand why. They lack causal insight into model behavior, have limited ability to intervene safely, and have little confidence that the next deployment or update won’t introduce a new failure elsewhere.

That’s when progress slows, not because the system can’t improve, but because the team can’t reason about it.

The missing layer in today’s robotics AI stack

Simulation, offline metrics, and task success rates are necessary but insufficient. What’s missing is an engineering feedback loop between robot behavior and the data and assumptions that drive it.

This is where advanced explainability enters: not as visualization or compliance tooling, but as a control layer for robotics systems.

When applied correctly, explainability enables four critical capabilities:

- Concept and attribution analysis: Understanding what visual, spatial, or sensor concepts the robot actually relies on when acting, and whether those align with engineering intent.

- Domain gap and data coverage analysis: Identifying where real deployment conditions violate training assumptions before failures scale.

- Behavior monitoring in production: Detecting gradual drift in perception or decision-making as sensors age and environments evolve.

- Closed-loop automation: Using insights to drive concrete corrective actions: dataset curation, domain adaptation, synthetic data generation, validation, and redeployment.

This layer doesn’t replace existing tools. It connects them. It turns opaque behavior into actionable signals.
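
To make the behavior-monitoring capability concrete, here is a minimal sketch of one possible drift check. It compares the distribution of a logged scalar signal (detection confidence is assumed here) between a reference window and a recent window using the population stability index. The function name, the sample values, and the 0.25 alert threshold are illustrative assumptions, not part of any specific product or of the workflow described above.

```python
import math
from typing import Sequence


def population_stability_index(
    reference: Sequence[float],
    recent: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between two samples of a scalar signal expected to lie in [0, 1]."""
    if not reference or not recent:
        raise ValueError("both samples must be non-empty")

    def bin_fractions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so out-of-range values land in the first or last bin.
            idx = min(max(int(v * bins), 0), bins - 1)
            counts[idx] += 1
        # Floor each fraction at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    ref = bin_fractions(reference)
    cur = bin_fractions(recent)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Hypothetical detection confidences logged at commissioning vs. this week.
commissioning = [0.90, 0.85, 0.92, 0.88, 0.95, 0.91, 0.87, 0.93]
this_week = [0.60, 0.55, 0.70, 0.65, 0.90, 0.58, 0.62, 0.68]

PSI_ALERT = 0.25  # assumed rule-of-thumb threshold; tune per signal
psi = population_stability_index(commissioning, this_week)
if psi > PSI_ALERT:
    print(f"confidence drift detected (PSI={psi:.2f}), review recent frames")
```

A check like this only says that something shifted. Tracing the shift to a sensor, a concept, or an environment is the part that attribution and domain-gap analysis would have to supply.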

From insight to control: a different development paradigm

With this control layer in place, the robotics development cycle changes.

Failures are traced to specific sensors, concepts, or environments, not guessed at. Drift is detected early, not after operational impact. Model updates are driven by evidence, not fear of regression.

Instead of freezing deployments, teams iterate safely. Instead of firefighting, they close loops: detect → explain → correct → validate. The robot becomes a system that adapts deliberately, not one that silently degrades.

This paradigm adds four operational workflows:

- Drift-aware perception maintenance: Continuously detect sensor and perception drift in production and trigger targeted recalibration or data refresh before performance degrades.

- Site-to-site domain transfer validation: Quantify domain gaps between deployment environments to safely adapt models across factories, warehouses, and facilities.

- Failure-driven dataset curation: Trace recurring failures to missing data regimes and curate only the data needed to correct behavior without over-collecting.

- Safe model evolution and regression control: Validate behavioral changes between model versions to deploy fixes confidently without introducing new failures.

Together, these workflows directly address the pains teams face today: stalled pilots, fragile autonomy, and the constant tradeoff between progress and operational risk.
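
As an illustration of the regression-control workflow, the sketch below compares per-scenario task success rates between a deployed model and a candidate, and reports any scenario where the candidate is worse by more than a tolerance. The `ScenarioResult` type, the scenario names, the counts, and the 2-point tolerance are all hypothetical; a real gate would likely also account for sample size and statistical significance.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class ScenarioResult:
    scenario: str   # e.g. a lighting condition, site, or SKU family
    successes: int
    trials: int

    @property
    def rate(self) -> float:
        return self.successes / self.trials


def find_regressions(
    baseline: Iterable[ScenarioResult],
    candidate: Iterable[ScenarioResult],
    tolerance: float = 0.02,
) -> dict[str, float]:
    """Map each regressed scenario to its drop in success rate."""
    base_by_name = {r.scenario: r for r in baseline}
    regressions: dict[str, float] = {}
    for result in candidate:
        base = base_by_name.get(result.scenario)
        if base is None:
            continue  # new scenario with no baseline to compare against
        drop = base.rate - result.rate
        if drop > tolerance:
            regressions[result.scenario] = drop
    return regressions


# Hypothetical validation results for two model versions.
deployed = [
    ScenarioResult("low_light", 90, 100),
    ScenarioResult("clutter", 80, 100),
]
proposed = [
    ScenarioResult("low_light", 95, 100),
    ScenarioResult("clutter", 70, 100),
]

for name, drop in find_regressions(deployed, proposed).items():
    print(f"regression in '{name}': success rate down {drop:.0%}")
```

In this example the candidate improves on low-light scenes but regresses on clutter, which an aggregate success rate could hide. Note that the sketch skips scenarios missing from either run; a stricter gate might treat a missing scenario as a failure.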

What changes for teams that get this right

Teams that adopt this paradigm scale beyond pilots faster. They experience fewer production incidents and regain confidence in autonomy. Model updates become routine instead of risky. Robots remain reliable longer, even as environments change.

Most importantly, robotics stops being a collection of brittle deployments and becomes a managed, evolving capability, one that production, safety, and engineering teams can all trust.

A practical next step

Start small. Pick one deployed system and ask a simple question: If this robot fails tomorrow, would we know why?

Assess where sensor drift, domain gaps, or silent degradation could already be happening. Identify whether today’s insights lead to concrete corrective actions or only to alerts.

You don’t need a full overhaul to begin. But you do need to look at your robots through the lens of control, not just performance.

That shift alone changes what’s possible.